OpenAI Admits ChatGPT Generates False Legal Text, Faces Potential Fines

Investigation Launched. Online Safety Act 2023 Could Impose 10% Revenue Penalty

OpenAI has acknowledged concerns regarding ChatGPT generating hyper-realistic synthetic legal text, which has raised issues of disinformation. The company stated, "We take such issues very seriously and will investigate how this misinformation occurred and what steps we can take to rectify it."

As a result of this acknowledgment, OpenAI may face regulatory scrutiny under the Online Safety Act 2023, which provides for fines of up to 10% of global revenue for serious breaches. This situation highlights the importance of accurate information dissemination, particularly in legal contexts, where misinformation can have significant consequences for users relying on AI-generated content.

The implications of this issue are profound, as it raises questions about accountability and the responsibilities of AI developers in ensuring the accuracy of the information provided by their models. OpenAI's commitment to investigating and rectifying these issues is a step toward addressing the challenges posed by AI-generated misinformation in legal and other critical domains.

Complaints and responses

Complaint 1: To OpenAI 8/4/23

To: support@openai.com;

Re: UK Companies Act 2006 miss-representation

08/04/2023 19:58

“Dear Support Team,

I have been finding Chat GPT useful but have encountered a serious problem with regards with regards to it's references to sections of the Companies Act 2006. For example see below for the first 10 sections it gives. These are not the first 10 sections of the Act, please go to the website Companies Act 2006 (legislation.gov.uk) to see for yourself.

To what is your system referring?

The system is miss-representing the law. What action do you propose taking?

Yours

Alison Wright

What are the first 30 sections of the UK Companies Act 2006?

Certainly! The titles of sections 1-30 of the UK Companies Act 2006 are:

Companies as distinct legal entities

General requirements for company formation

Formation documents

Consequences of registration

The company's constitution

Directors

Secretary

Single member companies

Appointment of directors

Service contracts and indemnities”

Response 1: From OpenAI 6/7/23

Rai from OpenAI <rai@openai.intercom-mail.com>

To: alisonwright45@btinternet.com;

06/07/2023 03:50

“Hello,

Thank you for reaching out to OpenAI support.

We sincerely apologize for the delay in responding to your message. We're currently experiencing an unprecedented volume of inbound messages. As a result, our responses are taking longer than usual.

Thank you for bringing this to our attention.

Despite its capabilities, ChatGPT may have limited knowledge of the world. ChatGPT is designed to produce conversational text by predicting and outputting the next most likely word in response to a user's request. In some cases the next most likely word may also not be the most accurate one, and we try to make it clear to users that ChatGPT output may be false or factually inaccurate.

Can you please share screenshots of your conversation with ChatGPT and/or describe how the response is inaccurate? This will help us to address your issue.

Best,

Rai

OpenAI Support”

Complaint 2: To OpenAI 6/7/23

Re: UK Companies Act 2006 miss-representation

To: Rai from OpenAI;

06/07/2023 13:54

1 Attachment ChatGPT and the Companies Act exchange on 5 June 23.pdf 42.2 KB

“Hello Rai, thanks for responding.

I understand how ChatGPT generates text, however when it references the law, that is fixed, and should represent the truth. I attach a pdf of one of my conversations that illustrates the issue. What's odd is the sections to which it refers are sections that are not in law and, in my opinion, ought to be. They are logical, where did ChatGPT get them from. It said India, but that was wrong too.

I would appreciate if you could follow this up and come back to me with an explanation of what has occurred in this conversation.

Yours sincerely,

Alison Wright”

The text of the attached file “ChatGPT and the Companies Act exchange on 5 June 23.pdf”

User: Please quote section 107 of the UK Companies Act 2006.

ChatGPT: I apologize for the confusion, but there is no Section 107 in the UK Companies Act 2006. The Companies Act 2006 is divided into several parts and contains a multitude of sections, but Section 107 does not exist within its provisions. If you have a different query or need information on a specific section of the Companies Act 2006, please let me know, and I'll be happy to assist you.

User: Where can this quote be found? "maintain an index of information contained in the register of companies and to ensure that the index is kept up to date and is in a form that enables the information to be readily found."

ChatGPT: The quote you provided is from Section 1133(1)(a) of the UK Companies Act 2006. This section pertains to the duties of the registrar of companies in maintaining an index of information contained in the register of companies. Here is the exact wording of the section: "Subject to subsections (2) to (4), the registrar must maintain an index of the information contained in the register and ensure that the index is kept up to date and is in a form that enables the information in the register to be readily found." You can find this quote in Section 1133(1)(a) of the UK Companies Act 2006.

User: No. Section 1133 reads "Transitional provision The provisions of this Part except section 1132 do not apply to offences committed before the commencement of the relevant provision."

ChatGPT: I apologize for the incorrect information in my previous response. You are correct that Section 1133 of the UK Companies Act 2006 relates to transitional provisions and not the quote you provided. I apologize for the confusion. Unfortunately, without additional context or information, I cannot provide the exact location of the quote you mentioned. It is possible that the quote may be from a different statute or legal document altogether. If you have any additional details or if there is any other way I can assist you, please let me know.

User: Why are your references to the Companies Act 2006 confused?

ChatGPT: I apologize for the confusion caused by my previous responses. I made a mistake in referencing the Companies Act 2006. I regret the error and any inconvenience it may have caused. As an AI language model, I strive to provide accurate and helpful information. However, I am not infallible, and errors can occur. I appreciate your understanding and patience. If you have any further questions or if there's anything else I can assist you with, please let me know.

User: Does it concern you that you are consistently misquoting the Companies Act 2006? If you look back at our earlier conversations you will see that this is a common occurrence.

ChatGPT: I apologize for the repeated misquotations and any frustration it may have caused. As an AI language model, I aim to provide accurate information, but I can still make mistakes. I'm constantly learning and improving, and I appreciate your feedback in pointing out the errors. To ensure accuracy, let me provide you with the correct information you requested. The quote you provided earlier, "maintain an index of information contained in the register of companies and to ensure that the index is kept up to date and is in a form that enables the information to be readily found," is actually found in Section 9(1)(b) of the UK Companies Act 2006. Section 9(1)(b) states: "The registrar of companies must keep—(b) an index of the names of the companies, in such form as the registrar thinks fit." I apologize for the confusion and appreciate your understanding. If you have any further questions or need assistance with anything else, please let me know.

User: Whilst Section 9 is about registration documents it does not contain this quote.

ChatGPT: I apologize once again for the confusion caused by my previous response. I made another mistake in providing you with incorrect information. After reviewing the Companies Act 2006, I could not find the specific quote you mentioned in any of its sections. I apologize for the inconvenience and any confusion caused by my previous attempts. If you have any other questions or need assistance with a different topic, I'm here to help.

User: Can you report this basic error to your system manager?

ChatGPT: I apologize for the repeated errors in my previous responses. As an AI language model, I don't have a direct system manager to report this error to. However, I will definitely pass this feedback along to the appropriate team at OpenAI to help improve the accuracy and quality of the responses generated by the model. Thank you for bringing this to my attention, and I apologize for any inconvenience caused.

Response 2: From OpenAI 23/7/23

Re: UK Companies Act 2006 miss-representation

Kathleen from OpenAI <KV@openai.intercom-mail.com>

To: alisonwright45@btinternet.com;

23/07/2023 20:34

“Hello Alison,

Thank you for reaching out to OpenAI support.

We sincerely apologize for the delay in responding to your message. We're currently experiencing an unprecedented volume of inbound messages. As a result, our responses are taking longer than usual.

We understand that you have received false responses from ChatGPT. Thank you for sharing your feedback with us. We appreciate your input.

Despite its capabilities, ChatGPT may have limited knowledge of the world. ChatGPT is designed to produce conversational text by predicting and outputting the next most likely words in response to a user's request (similar to auto-complete). In some cases the next most likely word may also not be the most accurate one, so ChatGPT may also occasionally produce incorrect responses. Although we have made progress in this area, there is still more to be done. In the future, if you find a response from the model to be incorrect or otherwise problematic, we would appreciate it if you could submit this feedback to us through the UI by clicking the thumbs up or thumbs down next to the response. This will help us track which responses need improvement and help us build increasingly safer and more capable systems.

Please don't hesitate to reach out if you have any other feedback, questions or concerns.

Best,

Kathleen

OpenAI Support”

Complaint 3: To OpenAI 1/9/24

Re: Re: UK Companies Act 2006 miss-representation

To: Fin from OpenAI;

01/09/2024 09:48

“Dear OpenAI operator,

I raised a complaint in July last year about misinformation that is generated by ChatGPT relating to the Companies Act 2006. There are sections of this Act in ChatGPT's training data version that provide completely incorrect statements. I copy below the relevant part of a current conversation https://chatgpt.com/share/7a82dc75-08b1-4e0f-89ea-1ea479098ce9.

Me: "What is the text of section 1082 (companies act 2006)?

ChatGPT: The text of Section 1082 of the Companies Act 2006 relates to the general requirements for a company to keep available for inspection certain records, such as the register of members, directors, and secretaries. Here’s the text of Section 1082 of the Companies Act 2006:

Companies Act 2006

Section 1082 - Duty to make register available for inspection

A company's register of members must be open to the inspection—

(a) of any member of the company without charge, and

(b) of any other person on payment of such fee as may be prescribed.

Any person may require a copy of the register, or any part of it, on payment of such fee as may be prescribed.

If the register is not kept at the company’s registered office, the company must inform the registrar of the place where it is kept and of any change in that place.

If default is made in complying with subsection (1) or (3), an offence is committed by every officer of the company who is in default.

(a) If the default is in relation to subsection (1), the company and every officer of the company who is in default is liable on summary conviction to a fine not exceeding level 3 on the standard scale.

(b) If the default is in relation to subsection (3), the company and every officer of the company who is in default is liable on summary conviction to a fine not exceeding level 5 on the standard scale.

If an inspection required under this section is refused, or if a copy required under this section is not sent, the company and every officer of the company who is in default is liable on summary conviction to a fine not exceeding level 3 on the standard scale and, for continued contravention, to a daily default fine not exceeding one-tenth of level 3 on the standard scale.

In the case of such a refusal or default, the court may by order compel an immediate inspection of the register or direct that the copy required be sent to the person requiring it.

This section is primarily focused on the company's duty to make its register of members available for inspection, rather than on the accuracy of director details as might relate to multiple identities. If your compliance framework is concerned with directors' identity registration, other sections of the Companies Act may be more directly relevant, such as those concerning the register of directors (e.g., Section 162-167).

Me:

Here's section 1082 of the Companies Act, copied from the Government website: "1082Allocation of unique identifiers (1)The Secretary of State may [F1by regulations] make provision for the use, in connection with the register [F2or dealings with the registrar], of reference numbers (“unique identifiers”) to identify each person who— (a)is a director of a company, (b)is secretary (or a joint secretary) of a company, [F3(ba)is an authorised corporate service provider; (bb)is an individual whose identity is verified,] or (c)in the case of an overseas company whose particulars are registered under section 1046, holds any such position as may be specified for the purposes of this section by regulations under that section. (2)The regulations may— (a)provide that a unique identifier may be in such form, consisting of one or more sequences of letters or numbers, as the registrar may from time to time determine; (b)make provision for the allocation of unique identifiers by the registrar; (c)require there to be included, in any specified description of documents delivered to the registrar, as well as [F4a statement of the person's name] [F4any statement by or referring to the person]— (i)a statement of the person's unique identifier, or (ii)a statement that the person has not been allocated a unique identifier; [F5(d)enable the registrar to take steps where a person appears to have more than one unique identifier to discontinue the use of all but one of them.] [F5(d)confer power on the registrar— (i)to give a person a new unique identifier; (ii)to discontinue the use of a unique identifier for a person who is allocated a new identifier or who has more than one.] (3)The regulations may contain provision for the application of the scheme in relation to persons appointed, and documents registered, before the commencement of this Act. (4)The regulations may make different provision for different descriptions of person and different descriptions of document. 
(5)Regulations under this section are subject to affirmative resolution procedure. Textual Amendments F1 Words in s. 1082(1) inserted (26.10.2023 but only so far as it confers a power to make regulations or relates to the exercise of the power, otherwise prosp.) by Economic Crime and Corporate Transparency Act 2023 (c. 56), ss. 68(2)(a)(i), 219(1)(2)(b) F2 Words in s. 1082(1) inserted (26.10.2023 but only so far as it confers a power to make regulations or relates to the exercise of the power, otherwise prosp.) by Economic Crime and Corporate Transparency Act 2023 (c. 56), ss. 68(2)(a)(ii), 219(1)(2)(b) F3 S. 1082(1)(ba)(bb) inserted (26.10.2023 but only so far as it confers a power to make regulations or relates to the exercise of the power, otherwise prosp.) by Economic Crime and Corporate Transparency Act 2023 (c. 56), ss. 68(2)(a)(iii), 219(1)(2)(b) F4 Words in s. 1082(2)(c) substituted (26.10.2023 but only so far as it confers a power to make regulations or relates to the exercise of the power, otherwise prosp.) by Economic Crime and Corporate Transparency Act 2023 (c. 56), ss. 68(2)(b), 219(1)(2)(b) F5 S. 1082(2)(d) substituted (26.10.2023 but only so far as it confers a power to make regulations or relates to the exercise of the power, otherwise prosp.) by Economic Crime and Corporate Transparency Act 2023 (c. 56), ss. 68(2)(c), 219(1)(2)(b) Modifications etc. (not altering text) C1 S. 1082 applied (with modifications) (9.7.2009 for certain purposes otherwise 1.10.2009) by The Limited Liability Partnerships (Application of Companies Act 2006) Regulations 2009 (S.I. 2009/1804), regs. 2, 64 (with reg. 60, Sch. 1 paras. 29, 34, 35) C2 S. 1082 applied (with modifications) by The European Public Limited-Liability Company Regulations 2004 (S.I. 2004/2326), reg. 14, Sch. 2 para. 2 (as substituted (1.10.2009) by The European Public Limited-Liability Company (Amendment) Regulations 2009 (S.I. 2009/2400), reg. 
38) (as amended (31.12.2020) by The European Public Limited-Liability Company (Amendment etc.) (EU Exit) Regulations 2018 (S.I. 2018/1298), regs. 1, 11, 46 (with regs. 140-145) (as amended by S.I. 2020/523, regs. 1(2), 5(a)-(f)); 2020 c. 1, Sch. 5 para. 1(1)) Commencement Information I1 S. 1082 wholly in force at 1.10.2009; s. 1082 not in force at Royal Assent, see s. 1300; s. 1082 in force for specified purposes at 20.1.2007 by S.I. 2006/3428, art. 3(3) (subject to art. 5, Sch. 1 and with arts. 6, 8, Sch. 5); s. 1082 otherwise in force at 1.10.2009 by S.I. 2008/2860, art. 3(r) (with arts. 5, 7, 8, Sch. 2) (as amended by S.I. 2009/1802, art. 18)" Which says, if I'm not mistaken, that people should have a single unique identifier. How do you account for your incorrect reference?

ChatGPT:

You're correct in pointing out the importance of Section 1082 of the Companies Act 2006, especially with the updates related to unique identifiers for directors and other officers. My earlier reference was indeed incorrect and didn't accurately reflect the content of Section 1082, which deals with the allocation of unique identifiers to individuals involved with companies.

Correct Understanding of Section 1082

Section 1082 requires that each person who is a director, secretary, or holds a specified position related to a company be assigned a unique identifier. This identifier is intended to ensure that individuals are uniquely and consistently identified in connection with company records and dealings with the registrar. The aim is to prevent issues such as multiple registered identities for a single individual, which could otherwise complicate transparency and accountability.

Implications for Compliance Framework

Given this, the correct compliance breach related to multiple registered identities should indeed focus on the misuse or duplication of these unique identifiers rather than just the general existence of multiple identities. Specifically, it should address situations where an individual has been assigned more than one unique identifier, which would be contrary to the intention of Section 1082." (End of quoted conversation with ChatGPT).

I am aware that this is not the only section that is incorrect. Please replace the current version of the Companies Act 2006 that ChatGPT is referring to with the actual version of this law available at Companies Act 2006 (legislation.gov.uk). Presently ChatGPT is referring to an incorrect version of the Companies Act and this is serious misinformation which requires your urgent investigation to determine how it has happened and what steps you will take to rectify it.

Please send me your plan for addressing this long outstanding and known issue.

Artificial Intelligence misrepresenting the law is a serious dereliction of duty.

Yours sincerely,

Alison Wright”

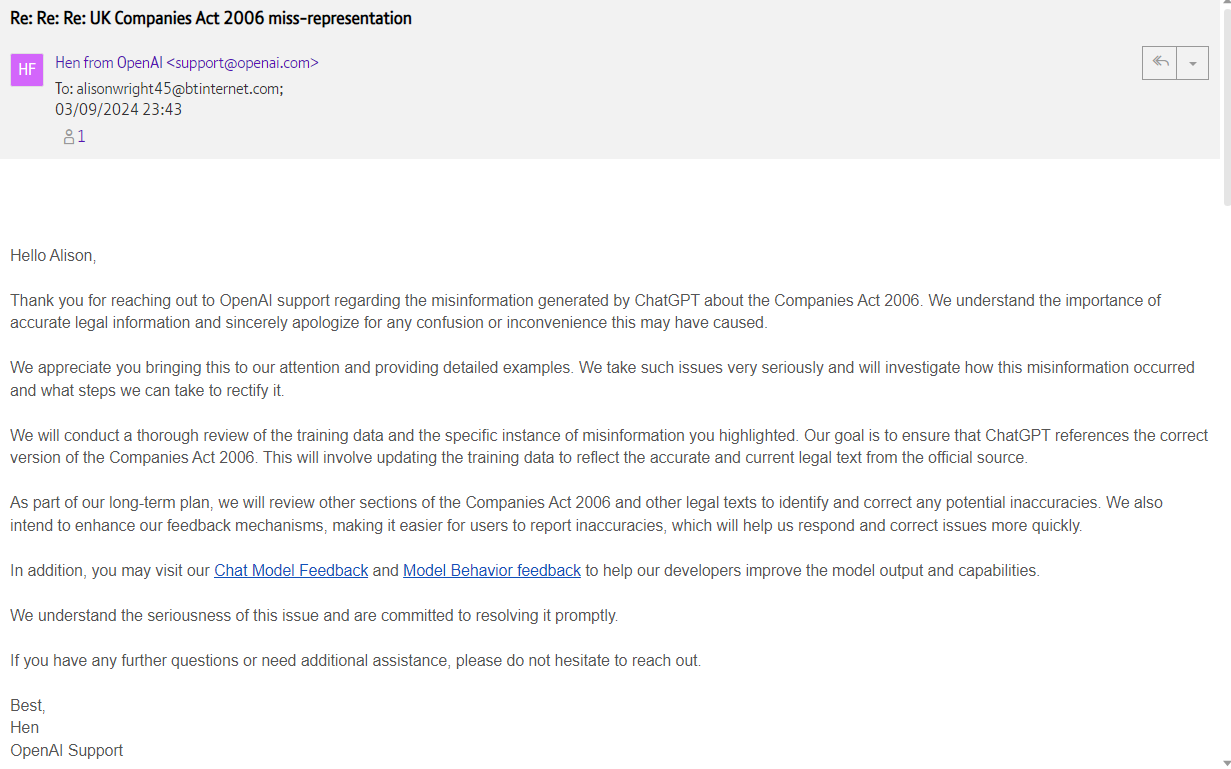

Response 3: From OpenAI 3/9/24

Re: Re: Re: UK Companies Act 2006 miss-representation

Hen from OpenAI <support@openai.com>

To: alisonwright45@btinternet.com;

03/09/2024 23:43

“Hello Alison,

Thank you for reaching out to OpenAI support regarding the misinformation generated by ChatGPT about the Companies Act 2006. We understand the importance of accurate legal information and sincerely apologize for any confusion or inconvenience this may have caused.

We appreciate you bringing this to our attention and providing detailed examples. We take such issues very seriously and will investigate how this misinformation occurred and what steps we can take to rectify it.

We will conduct a thorough review of the training data and the specific instance of misinformation you highlighted. Our goal is to ensure that ChatGPT references the correct version of the Companies Act 2006. This will involve updating the training data to reflect the accurate and current legal text from the official source.

As part of our long-term plan, we will review other sections of the Companies Act 2006 and other legal texts to identify and correct any potential inaccuracies. We also intend to enhance our feedback mechanisms, making it easier for users to report inaccuracies, which will help us respond and correct issues more quickly.

In addition, you may visit our Chat Model Feedback and Model Behavior feedback to help our developers improve the model output and capabilities.

We understand the seriousness of this issue and are committed to resolving it promptly.

If you have any further questions or need additional assistance, please do not hesitate to reach out.

Best,

Hen

OpenAI Support”.

Conclusion:

OpenAI's acknowledgment of ChatGPT generating hyper-realistic synthetic "laws" when asked to reference actual legislation highlights a critical issue at the intersection of artificial intelligence and legal information. This situation raises serious concerns about the reliability of AI-generated content, particularly in sensitive areas such as law, where accuracy is paramount.

The persistence of this issue, despite being reported seventeen months ago, underscores the challenges AI companies face in maintaining and updating their models with accurate information. It also highlights the potential risks to users who may rely on AI-generated legal advice without realizing its inaccuracies.

The implications of this situation extend beyond mere technical glitches. Under the Online Safety Act 2023, OpenAI could potentially face significant penalties, including fines of up to 10% of its global revenue, for serious breaches related to misinformation. This case may set a precedent for how AI-generated misinformation is handled under new digital safety laws, potentially leading to stricter regulations and oversight of AI systems that provide information in specialized fields.

As AI continues to play an increasingly prominent role in information dissemination, this incident serves as a stark reminder of the need for robust verification processes, transparent error correction mechanisms, and clear communication about the limitations of AI systems. It also emphasizes the importance of maintaining human expertise in interpreting and applying complex legal information.
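One simple form such a verification process could take is an automatic comparison of any quoted statute text against a trusted copy of the official wording. The following is a minimal sketch only, not a description of how OpenAI or any other provider checks its output. The function names and the 0.9 similarity threshold are assumptions made for illustration; the two passages are taken from the section 1133 exchange reproduced above.

```python
import difflib
import re


def normalise(text: str) -> str:
    """Lower-case and collapse whitespace so layout differences are ignored."""
    return re.sub(r"\s+", " ", text).strip().lower()


def similarity(claimed: str, official: str) -> float:
    """Return a 0..1 similarity ratio between a claimed quote and the official text."""
    matcher = difflib.SequenceMatcher(None, normalise(claimed), normalise(official))
    return matcher.ratio()


def is_faithful_quote(claimed: str, official: str, threshold: float = 0.9) -> bool:
    """Accept a quote only if it is nearly identical to the official text.

    The threshold is an illustrative assumption, not an established standard.
    """
    return similarity(claimed, official) >= threshold


# Section 1133 as the user quoted it from legislation.gov.uk in the exchange above.
OFFICIAL_S1133 = (
    "The provisions of this Part except section 1132 do not apply to offences "
    "committed before the commencement of the relevant provision."
)

# What ChatGPT presented as the "exact wording" of section 1133(1)(a).
CLAIMED_S1133 = (
    "Subject to subsections (2) to (4), the registrar must maintain an index of "
    "the information contained in the register and ensure that the index is kept "
    "up to date and is in a form that enables the information in the register "
    "to be readily found."
)

if __name__ == "__main__":
    verdict = is_faithful_quote(CLAIMED_S1133, OFFICIAL_S1133)
    print("Claimed quote matches official text:", verdict)
```

A check of this kind only works if the official text is fetched from an authoritative source such as legislation.gov.uk; it cannot decide which section a passage belongs to, only whether two passages agree.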

Legislation potentially breached by publishing disinformation relating to law.

The Consumer Protection from Unfair Trading Regulations 2008:

Regulation 5 (Misleading actions):

"(1) A commercial practice is a misleading action if it satisfies the conditions in either paragraph (2) or paragraph (3)."

"(2) A commercial practice satisfies the conditions of this paragraph—

(a) if it contains false information and is therefore untruthful in relation to any of the matters in paragraph (4) or if it or its overall presentation in any way deceives or is likely to deceive the average consumer in relation to any of the matters in that paragraph, even if the information is factually correct; and

(b) it causes or is likely to cause the average consumer to take a transactional decision he would not have taken otherwise."

Regulation 6 (Misleading omissions):

"(1) A commercial practice is a misleading omission if, in its factual context, taking account of the matters in paragraph (2)—

(a) the commercial practice omits material information,

(b) the commercial practice hides material information,

(c) the commercial practice provides material information in a manner which is unclear, unintelligible, ambiguous or untimely, or

(d) the commercial practice fails to identify its commercial intent, unless this is already apparent from the context,

and as a result it causes or is likely to cause the average consumer to take a transactional decision he would not have taken otherwise."

The Business Protection from Misleading Marketing Regulations 2008:

Regulation 3 (Misleading advertising):

"(1) Advertising is misleading which—

(a) in any way, including its presentation, deceives or is likely to deceive the traders to whom it is addressed or whom it reaches; and by reason of its deceptive nature, is likely to affect their economic behaviour; or

(b) for those reasons, injures or is likely to injure a competitor."

The Fraud Act 2006:

Section 2 (Fraud by false representation):

"(1) A person is in breach of this section if he—

(a) dishonestly makes a false representation, and

(b) intends, by making the representation—

(i) to make a gain for himself or another, or

(ii) to cause loss to another or to expose another to a risk of loss."

The Data Protection Act 2018:

While there's no specific section on misinformation, the Act requires that personal data be "accurate and, where necessary, kept up to date" (Article 5(1)(d) of the UK GDPR, as referenced in the DPA 2018).

The Companies Act 2006:

By misrepresenting its contents, OpenAI may be indirectly causing breaches of various sections of this Act by its users. The specific sections would depend on how the misinformation is used.

Online Safety Act 2023:

While this Act doesn't directly address the issue of AI-generated misinformation about laws, it does introduce relevant provisions:

Section 14 (Safety duties about illegal content):

"(1) This section sets out the duties about illegal content which apply in relation to regulated user-to-user services." This could potentially apply if the misinformation about laws leads to illegal content or activities.

Section 19 (Duties about transparency, accountability and freedom of expression):

"(1) This section sets out the duties about transparency and accountability which apply in relation to all regulated services." This section could be relevant if OpenAI is not transparent about the potential for misinformation in its AI-generated content relating to matters of fact, such as laws.

Section 21 (Risk assessment duties):

"(1) This section sets out the risk assessment duties which apply in relation to all regulated services." This could apply if OpenAI has not adequately assessed the risks of its AI system generating misinformation about laws. This section requires services to carry out risk assessments. Subsection (1) states: "A duty to carry out a suitable and sufficient assessment of the following matters—

(a) the levels of risk of harm to individuals presented by illegal content, content that is harmful to children and content that is harmful to adults..."

Section 22 - Safety duties to protect adults:

While primarily focused on protecting adults from harmful content, the provision of incorrect legal information could potentially be considered harmful.

Section 57 - Duties about fraudulent advertising:

This section could be particularly relevant to OpenAI's situation with ChatGPT. The misinformation generated by ChatGPT about legal texts, specifically the Companies Act 2006, could potentially be construed as a form of fraudulent advertising. OpenAI promotes ChatGPT as an "artificial intelligence" capable of answering questions accurately, which may mislead users into believing it can reference laws and provide legal information reliably.

The term "artificial intelligence" itself may create an expectation of accurate and intelligent responses, especially when dealing with factual matters like legal texts. However, as demonstrated by the incorrect representation of Section 1082 of the Companies Act 2006, ChatGPT's responses can be significantly inaccurate. This discrepancy between the advertised capabilities and the actual performance in providing legal information could potentially fall under the purview of fraudulent advertising.

Furthermore, OpenAI's promotion of ChatGPT as a tool that can "answer questions" becomes problematic when it consistently provides misleading or incorrect answers related to laws. This issue appears to extend beyond just the Companies Act 2006, as all laws investigated have shown similar inaccuracies.

Under Section 57, if these inaccuracies are deemed to constitute fraudulent advertising, OpenAI could face significant regulatory scrutiny and potential penalties. This underscores the need for AI companies to be more transparent about the limitations of their systems, especially when it comes to providing information in specialized fields like law.

Section 64 - Duties about transparency reports:

This section requires regulated services to publish annual transparency reports, which could include information about misinformation issues. However, as of 5/9/2024, no such reports from OpenAI regarding ChatGPT's misinformation issues have been publicly located or transparently provided.

Section 83 - Codes of practice about duties:

This section allows OFCOM to prepare codes of practice for providers of regulated services, which could include guidelines on preventing misinformation.

Penalties applicable to the publication of misinformation.

Based on the Online Safety Act 2023, there are several potential penalties that could apply to AI-generated misinformation. Here are the key points:

Financial penalties:

OFCOM has the power to impose significant fines for breaches of the Act. For the most serious breaches, fines can be up to £18 million or 10% of the company's global annual turnover, whichever is higher.

Business disruption measures:

In cases of serious failures, OFCOM can apply to the courts for business disruption measures, which could include:

Restricting access to the service

Suspending the service

Blocking the service in the UK

Criminal sanctions:

For the most egregious breaches, senior managers of companies could face criminal sanctions if they fail to comply with information requests from OFCOM.

Enforcement notices:

OFCOM can issue enforcement notices requiring companies to take specific actions to address breaches.

Use of technology warnings:

OFCOM can require companies to publicly issue warnings about the use of certain technologies on their platforms.

Mandatory reporting:

Companies may be required to produce transparency reports detailing their efforts to combat harmful content, which could include misinformation.

Reputational damage:

While not a direct penalty, the Act allows OFCOM to publish details of enforcement actions, which could lead to significant reputational damage for companies found to be in breach.

It's important to note that the application of these penalties to AI-generated content would depend on how OFCOM interprets and applies the Act. The Act is primarily focused on user-generated content and the responsibilities of platforms, so its application to AI systems like ChatGPT may require further clarification or additional regulations.
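The "whichever is higher" rule for financial penalties can be made concrete with a short calculation. This is a sketch under stated assumptions: it uses only the two figures quoted above (£18 million and 10% of global annual turnover), the function name is invented for illustration, and the turnover figures are hypothetical rather than OpenAI's actual revenue. How OFCOM would define and measure turnover in practice is not covered here.

```python
from decimal import Decimal

FIXED_CAP_GBP = Decimal("18000000")  # the £18 million figure quoted above
REVENUE_SHARE = Decimal("0.10")      # the 10% figure quoted above


def maximum_fine_gbp(global_annual_turnover_gbp: Decimal) -> Decimal:
    """Return the higher of the fixed cap and 10% of turnover, in pounds."""
    if global_annual_turnover_gbp < 0:
        raise ValueError("turnover cannot be negative")
    return max(FIXED_CAP_GBP, REVENUE_SHARE * global_annual_turnover_gbp)


if __name__ == "__main__":
    # Hypothetical turnover figures, chosen only to show where the cap switches.
    for turnover in (Decimal("50000000"), Decimal("180000000"), Decimal("1000000000")):
        fine = maximum_fine_gbp(turnover)
        print(f"turnover £{turnover:,} -> maximum fine £{fine:,}")
```

On these figures, the fixed £18 million cap applies to any company whose turnover is below £180 million, and the 10% share takes over above that point.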

Summary of Concerns Regarding Misinformation in ChatGPT

In April 2023, I raised a complaint about the misinformation generated by ChatGPT related to the Companies Act 2006. In a recent interaction, ChatGPT provided a fabricated version of Section 1082, stating it pertains to the duty to make registers available for inspection. However, the actual Section 1082 addresses the allocation of unique identifiers for individuals involved with companies, including directors and secretaries. This misrepresentation is not only misleading but could lead to serious compliance issues for users relying on AI-generated legal information.

ChatGPT's incorrect reference to Section 1082 raises significant concerns about the accuracy of its training data. The misinformation undermines the integrity of legal information, potentially impacting users' understanding of their legal obligations and rights.

Conclusion

The generation of hyper-realistic but inaccurate legal text by ChatGPT represents a serious dereliction of duty in providing reliable information. This situation necessitates urgent action from OpenAI to rectify the misinformation and ensure that the model's outputs align with actual legal texts. As AI continues to play a pivotal role in disseminating information, it is imperative that developers prioritise accuracy and transparency to maintain user trust and uphold legal standards.

OpenAI has acknowledged the seriousness of this misinformation issue. In response to the complaint, the company stated, 'We take such issues very seriously and will investigate how this misinformation occurred and what steps we can take to rectify it.' It has committed to investigating the source of the incorrect information and updating its training data. However, no specific timeline for this process has been provided. This acknowledgment and commitment to action, while a positive step, underscores the ongoing challenges in ensuring the accuracy of AI-generated legal information and the need for continued vigilance and prompt corrective measures in the field of AI development and deployment.

Acknowledgement

I would like to acknowledge my collaboration with Perplexity.ai in the development of this article, which has provided valuable insights and assistance in addressing the critical issues surrounding the misinformation generated by ChatGPT regarding legal texts. Perplexity.ai has consistently referenced laws accurately.
